I built a self-hosted alternative to Google's Video Intelligence API after spending about $450.

I have over 2TB of personal video footage accumulated over the years — mostly outdoor GoPro footage, family trips, random clips I always meant to do something with. Finding a specific moment in that library was effectively impossible. Not hard. Impossible.

Imagine trying to find "that scene where I was riding a bike and laughing" across thousands of unlabeled video files. You can't keyword-search a .MOV file. You can't ctrl+F a memory. So I started scrubbing. And scrubbing. And eventually gave up.

First, I tried the Google Cloud Video Intelligence API

The Google Cloud Video Intelligence API looked perfect on paper. It transcribes audio, detects objects, recognizes faces, and returns structured metadata you can query. I ran it on a few videos to test. It worked beautifully.

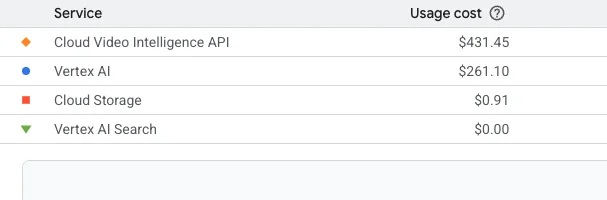

Then I got the bill.

$450 for a few test videos. Scaling to my entire 2TB library would cost well over $1,500 — and I'd have to upload all of my raw personal footage to Google's cloud. NDA footage, family videos, unreleased content. That wasn't happening.

So I built the same thing locally

I spent a few weekends building Edit Mind — a completely self-hosted video analysis tool that runs entirely on your own hardware. No cloud. No upload. No bill at the end of the month.

Here's what it does:

- Indexes videos locally: Transcribes audio, detects objects (YOLOv8), recognizes faces, analyzes emotions

- Semantic search: Type "scenes where @john is happy near a campfire" and get instant results

- Zero cloud dependency: Your raw videos never leave your machine

- Vector database: Uses ChromaDB locally to store metadata and enable semantic search

- NLP query parsing: Converts natural language to structured queries (Gemini by default; Ollama supported for fully offline use)

- Rough cut generation: Select scenes and export as video + FCPXML for Final Cut Pro
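
To make the query-parsing step concrete, here is a minimal sketch of what "natural language in, structured query out" can look like. It is a rule-based stand-in, not Edit Mind's actual parser (which uses Gemini or Ollama as described above). The `ParsedQuery` fields, the `EMOTION_WORDS` table, and the `parse_query` function are all hypothetical names made up for illustration.

```python
import re
from dataclasses import dataclass, field


@dataclass
class ParsedQuery:
    """Structured form of a search query (hypothetical schema)."""
    faces: list[str] = field(default_factory=list)     # names taken from @mentions
    emotions: list[str] = field(default_factory=list)  # normalized emotion labels
    text: str = ""                                      # remainder, for semantic matching


# Assumed vocabulary: word in the query -> emotion label stored with a scene.
EMOTION_WORDS = {
    "happy": "happy",
    "laughing": "happy",
    "sad": "sad",
    "angry": "angry",
    "surprised": "surprise",
}


def parse_query(query: str) -> ParsedQuery:
    """Rule-based stand-in for the LLM parsing step."""
    # "@john" marks a known face to filter on.
    faces = re.findall(r"@(\w+)", query)
    # Map any emotion words onto the assumed label set.
    words = re.findall(r"[a-z']+", query.lower())
    emotions = sorted({EMOTION_WORDS[w] for w in words if w in EMOTION_WORDS})
    # Drop the @mentions and collapse whitespace; the rest is free text.
    text = " ".join(re.sub(r"@\w+", "", query).split())
    return ParsedQuery(faces=faces, emotions=emotions, text=text)


parsed = parse_query("scenes where @john is happy near a campfire")
print(parsed)
```

The face and emotion fields would act as exact filters on scene metadata, while the leftover text is what a vector search would match semantically. An LLM handles phrasing that a lookup table like this one cannot.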

How it works

The technical stack

- Electron app: Cross-platform desktop (Mac + Windows)

- Python backend: ML processing with face_recognition, YOLOv8, FER

- ChromaDB: Local vector storage — no cloud database

- FFmpeg: Video processing and frame extraction

- Plugin architecture: Easy to extend with custom analyzers
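
As an illustration of the frame-extraction piece of that stack, the sketch below builds an FFmpeg command that samples frames at a fixed rate so the analyzers have still images to work on. The sampling rate, output naming, and function names are my assumptions for the example, not necessarily what Edit Mind does internally. The FFmpeg options themselves (`-i`, `-vf fps=...`, `-q:v`) are standard.

```python
import subprocess
from pathlib import Path


def frame_extract_command(video: str, out_dir: str, fps: float = 1.0) -> list[str]:
    """Build an ffmpeg command that writes `fps` frames per second as JPEGs."""
    pattern = str(Path(out_dir) / "frame_%06d.jpg")  # frame_000001.jpg, ...
    return [
        "ffmpeg",
        "-hide_banner",
        "-i", video,          # input file
        "-vf", f"fps={fps}",  # resample to the requested frame rate
        "-q:v", "2",          # JPEG quality (lower number = higher quality)
        pattern,
    ]


def extract_frames(video: str, out_dir: str, fps: float = 1.0) -> None:
    """Run the command; requires ffmpeg to be installed and on PATH."""
    Path(out_dir).mkdir(parents=True, exist_ok=True)
    subprocess.run(frame_extract_command(video, out_dir, fps), check=True)


# Hypothetical file names: one frame every two seconds from ride.mov.
cmd = frame_extract_command("ride.mov", "frames", fps=0.5)
print(" ".join(cmd))
```

Sampling well below the source frame rate is the usual trade-off here: object and face detection rarely need every frame, and fewer frames means much shorter indexing time.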

Why self-hosting wins here

- Privacy: Your footage stays on your hardware — safe for NDAs, unreleased content, personal archives

- Cost: Free after setup (vs $0.10/min on GCP)

- Speed: No upload/download bottlenecks — results are instant after indexing

- Customization: Plugin system for custom analyzers

- Offline capable: Swap Gemini for Ollama and it runs 100% air-gapped
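
To put the cost line in perspective, here is a back-of-the-envelope estimator using the $0.10/min figure above. It is a rough sketch: the `features` multiplier assumes each analysis type is billed separately per minute, and actual cloud pricing varies by feature and changes over time. The function name and the 100-hour example are mine.

```python
def estimate_cloud_cost(minutes: float, rate_per_minute: float = 0.10,
                        features: int = 1) -> float:
    """Estimated bill in dollars for analyzing `minutes` of video.

    `features` is the number of analysis types run on each minute
    (an assumption about how per-minute billing stacks up).
    """
    return round(minutes * rate_per_minute * features, 2)


# Hypothetical library: 100 hours of footage.
library_minutes = 100 * 60
one_feature = estimate_cloud_cost(library_minutes)
three_features = estimate_cloud_cost(library_minutes, features=3)
print(f"1 feature:  ${one_feature:,.2f}")
print(f"3 features: ${three_features:,.2f}")
```

Per-minute pricing scales linearly with both footage length and the number of analyses, so the bill grows quickly once you want transcription, objects, and faces on the same library.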

Current limitations (being honest)

- Needs decent hardware: a GPU is recommended, but CPU works

- Face recognition requires initial training (adding known faces before search)

- First-time indexing is slow: plan for it to run overnight on large libraries

- Query parsing uses the Gemini API by default (easily swapped for Ollama for fully offline use)

Who this is for

I can't be the only person drowning in video files. This was built for anyone with a large video library who wants semantic search without cloud costs or privacy trade-offs:

- Video editors and content creators with large footage libraries

- Documentary makers working with hours of raw interviews

- Parents with years of family footage they can never find anything in

- Security camera operators who need to find specific incidents

- Anyone who's ever said "I know I filmed this, I just can't find it"

Self-host it free via Docker, or pre-order the desktop app for a plug-and-play experience with Final Cut Pro and DaVinci Resolve integration.

Lifetime license · Pre-orders only · Ships Q3 2026